The decisions

No hand skeleton. My first instinct was to render MediaPipe's landmark points as a visible overlay, dots and lines showing the tracking in real time. I cut it immediately because the moment you can see the mechanism, the illusion collapses. The orb and particles are the only evidence anything is happening, and the technology has to disappear.

Thermal color, not rainbow. A rainbow ramp reads as tech demo, since it signals "look what this can do" rather than "feel what this is." A thermal ramp from deep purple at rest through red-orange and gold up to white-hot reads as energy, and purple as the cool state felt magical where blue would have felt clinical. Color is doing the emotional work.
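A ramp like this can be sketched as piecewise-linear interpolation between a few color stops. The stop positions and RGB values below are hypothetical, chosen only to match the hues named above, and `thermalColor` is an illustrative name, not the function from the build.

```typescript
// Hypothetical color stops: the hues come from the post, the exact
// RGB values and positions are assumptions for illustration.
type RGB = [number, number, number];

const THERMAL_STOPS: { at: number; color: RGB }[] = [
  { at: 0.0, color: [48, 16, 96] },    // deep purple, the resting state
  { at: 0.4, color: [230, 70, 30] },   // red-orange
  { at: 0.75, color: [255, 200, 60] }, // gold
  { at: 1.0, color: [255, 255, 255] }, // white-hot
];

// Map a charge level in [0, 1] to a color by interpolating
// linearly between the two nearest stops.
function thermalColor(charge: number): RGB {
  const t = Math.min(1, Math.max(0, charge)); // clamp out-of-range input
  for (let i = 1; i < THERMAL_STOPS.length; i++) {
    const lo = THERMAL_STOPS[i - 1];
    const hi = THERMAL_STOPS[i];
    if (t <= hi.at) {
      const f = (t - lo.at) / (hi.at - lo.at);
      return [
        Math.round(lo.color[0] + (hi.color[0] - lo.color[0]) * f),
        Math.round(lo.color[1] + (hi.color[1] - lo.color[1]) * f),
        Math.round(lo.color[2] + (hi.color[2] - lo.color[2]) * f),
      ];
    }
  }
  return THERMAL_STOPS[THERMAL_STOPS.length - 1].color;
}
```

Keeping the stops in one table means the emotional arc of the ramp can be tuned by editing four rows rather than touching the rendering code.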

Full-screen camera, not a widget. A small mirror in the corner makes the effect feel like a feature. Full-screen puts you inside the experience: you're not looking at a tool, you're in a room with an orb. That shift in scale is what makes the whole thing feel architectural instead of gimmicky.

Discovery over instruction. There's no tutorial anywhere on the page. You show your hands and something sparks. You bring them together and it charges. You figure out you can throw it. The experience tracks which gestures you've found in-session and only surfaces hints for the ones you haven't, so the interface feels like it's paying attention to you specifically. Finding each mechanic on your own is the experience.
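The hint logic can be sketched as a set of discovered gestures held in memory for the session. The gesture names, hint copy, and `DiscoveryTracker` class here are all hypothetical; the post only says that found gestures are tracked in-session and that hints appear for the rest.

```typescript
// Hypothetical gesture names and hint copy, for illustration only.
type Gesture = "show" | "charge" | "throw";

const HINTS: Record<Gesture, string> = {
  show: "Raise both hands",
  charge: "Bring your hands together",
  throw: "Push forward, fast",
};

// Tracks which gestures the visitor has found. State lives in memory,
// so it resets on reload, which matches "in-session" tracking.
class DiscoveryTracker {
  private found = new Set<Gesture>();

  markFound(gesture: Gesture): void {
    this.found.add(gesture);
  }

  // Hints only for gestures that have not been discovered yet.
  pendingHints(): string[] {
    return (Object.keys(HINTS) as Gesture[])
      .filter((g) => !this.found.has(g))
      .map((g) => HINTS[g]);
  }
}
```

A caller would invoke `markFound` whenever the gesture detector fires and render whatever `pendingHints` returns, so a hint disappears the moment the visitor earns it.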

Fiction as design language. The telemetry numbers (energy percentage, plasma temperature, containment status, throw velocity) are completely made up. There's no real frequency, no actual temperature threshold. But I made them up carefully, because the metrics were the language I used to think through how the orb should look and feel at each charge state. Energy maps to color, frequency maps to how the surface pulses, and temperature is why it shifts from purple to white-hot. The values are invented but they correlate exactly with what's on screen, which is what makes the readouts feel credible.
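One way to keep invented readouts in step with the visuals is to derive every number from the same charge value that drives the rendering. This is a minimal sketch under that assumption: the field names, ranges, and thresholds are made up for illustration and are not the values used in the build.

```typescript
// All ranges and thresholds below are hypothetical.
interface Telemetry {
  energyPct: number;    // shown as the energy percentage
  pulseHz: number;      // drives how fast the surface pulses
  plasmaTempK: number;  // fictional temperature readout
  containment: "STABLE" | "STRAINED" | "CRITICAL";
}

// Derive every readout from one charge value in [0, 1], so the
// numbers can never disagree with what the orb is doing on screen.
function telemetryFor(charge: number): Telemetry {
  const t = Math.min(1, Math.max(0, charge)); // clamp out-of-range input
  return {
    energyPct: Math.round(t * 100),
    pulseHz: 0.5 + t * 5.5,
    plasmaTempK: Math.round(1000 + t * 9000),
    containment: t < 0.6 ? "STABLE" : t < 0.9 ? "STRAINED" : "CRITICAL",
  };
}
```

Because there is a single source of truth, changing how the orb charges changes the readouts with it, which is what keeps fictional numbers feeling credible.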

Japanese as authorship, not decoration. Widget headers run in Japanese with monospaced English subtitles beneath: 気力測定 / ORB TELEMETRY, 手の動き / HAND METRICS. The page title is a single kanji. A small watermark credits 気力研究所, a completely fictional Ki Energy Research Institute. These aren't translation choices. They set a register: terse, slightly ceremonial, as if the object had its own institutional backstory. People who read Japanese catch a different layer of the joke. People who don't just see a design choice.

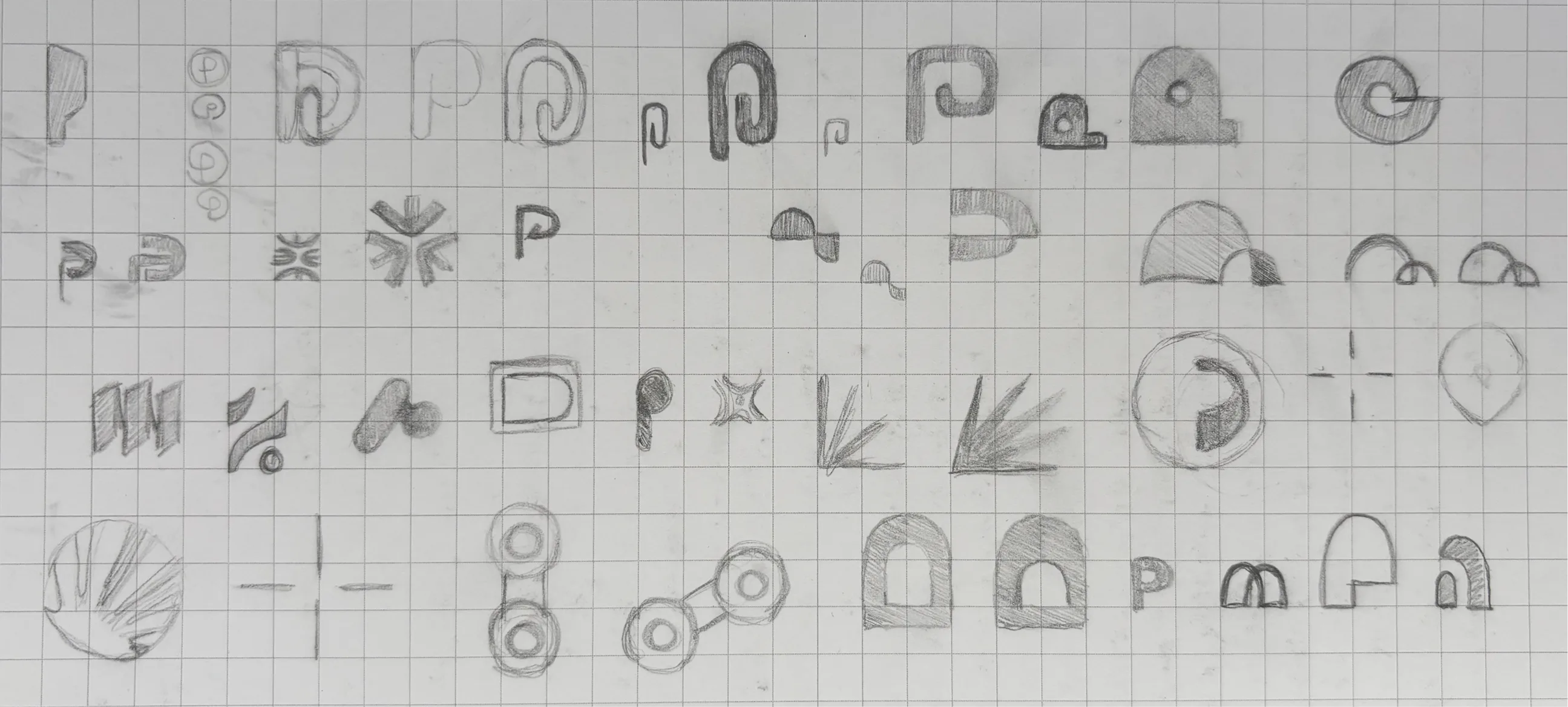

Monospaced throughout, no display type. The original build spec included Bangers, the chunky comic font, as a nod to the source material. I replaced it with Space Mono across everything because Bangers reads as costume; mono type reads as instrument. The gap between "I know what this is referencing" and "this looks like a fan site" is entirely typographic.
